The Disciplines

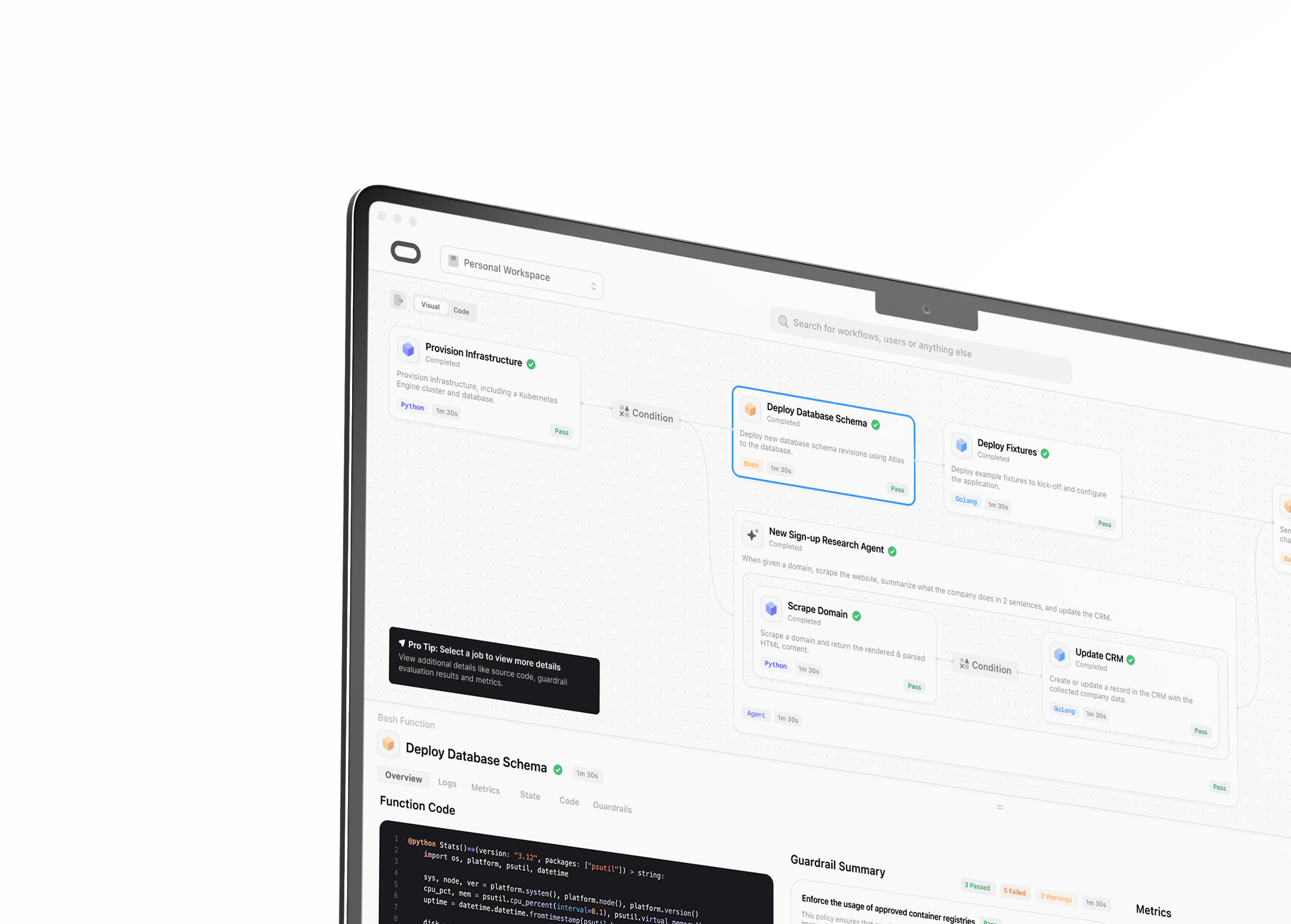

All your workflows. One language.

Orchestrate machine learning, data pipelines, and infrastructure & application deployments using a single unified surface.

The Pillars

For every team, everywhere.

Empower every discipline to execute everything from CI/CD pipelines to machine learning training jobs seamlessly across the globe.

One platform. Every workflow.

Unify any workflow on a single, elegantly simple platform.

Chat your workflows into existence.

The world is your runtime.

Map every step to the perfect machine. Run it on any continent. Effortlessly.

Build together. In perfect sync.

Empower cross-functional teams to collaborate in a shared workspace.

The Platform

Limitless power. Zero friction.

Orchestrate every component of your architecture through a perfectly unified experience.

One language. Every discipline.

Unite every engineering team on a single orchestration surface. Build CI/CD pipelines and machine learning jobs on the same platform without switching contexts.

Intelligence, delegated.

Write the workflow yourself, or hand your multi-runtime functions over to an AI agent and let it dynamically chart the execution path.

The Language

Code without boundaries.

Master your distributed, polyglot reality using one elegant, universally declarative language.

The Theory

Our Manifesto

The modern engineering landscape is fractured by a fragmentation tax that forces developers to bridge the gap between machine learning models, infrastructure code, and disparate runtimes using fragile, trigger-based tools like GitHub Actions and Airflow. This technical debt creates invisible walls where state cannot flow easily between disciplines, and the cognitive load of switching between execution models stifles innovation. Theory is born from the necessity to bring these engineering disciplines back together under a single, declarative surface. By providing a unified orchestration language that treats every runtime, from Python and Rust to Bash and containers, as a first-class citizen, Theory dissolves the boundaries between infrastructure, model training, and data processing.

Theory replaces the rigid, fragmented orchestrators of the past with a distributed execution operating system designed for the polyglot reality. We are not replacing specialized, industry-standard tools like TensorFlow or Terraform; instead, we are providing the professional-grade nervous system they run on. In a standard stack, moving data from a data pipeline to a CI/CD pipeline requires custom APIs or manual serialization. Theory eliminates this overhead with a universal type system that allows a result from a Python-based TensorFlow job to be passed directly into a Go- or Bash-based Terraform script without manual, error-prone JSON parsing.
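
To make the idea of a typed hand-off concrete, here is a minimal Python sketch. It is an illustration only and does not show Theory's syntax or its type system: `TrainingResult`, `encode`, `decode`, and the `example://` URI are hypothetical names chosen for this example. The point is that the receiving step asks for a declared type and gets a validated value back, rather than picking fields out of raw JSON by hand.

```python
import json
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class TrainingResult:
    # Hypothetical result type produced by a training step.
    model_uri: str
    accuracy: float
    gpu_count: int


def encode(value):
    """Serialize a dataclass instance together with its type name."""
    return json.dumps({"type": type(value).__name__, "fields": asdict(value)})


def decode(payload, expected):
    """Rebuild `expected` from a payload, rejecting mismatched types or fields."""
    envelope = json.loads(payload)
    if envelope["type"] != expected.__name__:
        raise TypeError(f"expected {expected.__name__}, got {envelope['type']}")
    data = envelope["fields"]
    for f in fields(expected):
        # f.type is the annotated class (str, float, int) for this simple schema.
        if not isinstance(data.get(f.name), f.type):
            raise TypeError(f"field {f.name!r} is not {f.type.__name__}")
    return expected(**data)


# Producer side (for example, the training job).
payload = encode(TrainingResult("example://model", 0.93, 4))

# Consumer side (for example, the deployment step) receives a checked value.
result = decode(payload, TrainingResult)
```

In this sketch a payload whose `accuracy` is a string is rejected with a `TypeError` at the boundary, before any downstream step runs on bad data.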

The platform functions as a stateful central brain that manages variable scope and execution logic across a global fleet. Every transition within a workflow is persisted, so the system always holds a complete record of the program’s state. If an underlying system fails, the platform resumes the workflow exactly where it left off, with no lost state and no silent failures. This reliability lets engineers focus on the logic of their work rather than the stability of the underlying infrastructure.
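
The resume-where-it-left-off behavior can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of how Theory is implemented: `run_durable` and the JSON checkpoint file are hypothetical, and the sketch assumes every step returns a JSON-serializable value.

```python
import json
import os


def run_durable(steps, checkpoint_path):
    """Run named steps in order, persisting state after every transition."""
    # Load prior state if a checkpoint exists; otherwise start fresh.
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)
    else:
        state = {"done": [], "vars": {}}

    for name, fn in steps:
        if name in state["done"]:
            continue  # completed before the interruption, so skip it
        # Each step sees the results of the steps that ran before it.
        state["vars"][name] = fn(state["vars"])
        state["done"].append(name)
        # Write to a temp file and rename it, so a crash mid-write
        # cannot leave a half-written checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, checkpoint_path)
    return state["vars"]
```

If a step raises, the checkpoint still holds every earlier result, so calling `run_durable` again with the same steps and path skips the completed work and continues from the failed step.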

The execution model is designed to be distributed by default, managing the lifecycle of work across diverse compute environments. The platform intelligently routes tasks to the most appropriate resources, whether that requires high-performance hardware for heavy computation or lightweight environments for administrative tasks. To ensure high performance, the system maintains ready-to-use environments that eliminate the latency typically associated with starting new tasks. This allows a single Theory workflow to orchestrate a complex sequence of events, from training an AI model to deploying the cloud resources it requires, all within a unified execution window.
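
One way to picture this routing is the following Python sketch. It assumes a deliberately simple policy that is not taken from Theory itself: each task declares what it needs, and the scheduler picks the smallest machine that fits, preferring one whose environment is already warm. `Worker`, `Task`, and `route` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Worker:
    name: str
    gpus: int
    memory_gb: int
    warm: bool  # True if an environment is already started and idle


@dataclass(frozen=True)
class Task:
    name: str
    gpus: int = 0
    memory_gb: int = 1


def route(task, workers):
    """Pick the smallest worker that fits the task, preferring warm ones."""
    fits = [
        w for w in workers
        if w.gpus >= task.gpus and w.memory_gb >= task.memory_gb
    ]
    if not fits:
        raise LookupError(f"no worker can run {task.name}")
    # Sort key: warm workers first, then the least over-provisioned machine.
    return min(fits, key=lambda w: (not w.warm, w.gpus, w.memory_gb))


fleet = [
    Worker("gpu-a", gpus=8, memory_gb=256, warm=True),
    Worker("small-cold", gpus=0, memory_gb=2, warm=False),
    Worker("small-warm", gpus=0, memory_gb=4, warm=True),
]

training_worker = route(Task("train", gpus=4, memory_gb=64), fleet)
lint_worker = route(Task("lint"), fleet)
```

Here the training task lands on `gpu-a`, while the lightweight lint task goes to `small-warm`, which avoids both the startup cost of the cold machine and tying up GPU hardware.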

By replacing the fragmented overhead of legacy CI/CD and workflow tools, Theory returns engineering hours to what actually matters: building products. We are moving beyond the era of specialized silos into an age of universal orchestration where asynchrony and distribution are baked into the syntax rather than bolted on as an afterthought. Theory provides the grounded, supportive, and scalable foundation for any engineering discipline to execute any workflow on the same platform. It is the fundamental layer that allows diverse tools to speak the same language, ensuring that the only limit to an engineering pipeline is the logic of the theory itself.

Tom Gouder

Founder

THEORY BETA ACCESS

Sign up for Early Access